Kernel Methods in Machine Learning

This course covers basic concepts of machine learning in high dimension and the importance of regularization. We study in detail high-dimensional linear models regularized by the Euclidean norm, including ridge regression, ridge logistic regression and support vector machines. We then show how positive definite kernels allow us to transform these linear models into rich nonlinear models that can be applied even to non-vectorial data such as strings and graphs, and that are convenient for integrating heterogeneous data.
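To make the kernel idea concrete, here is a minimal NumPy sketch of kernel ridge regression. It assumes a Gaussian kernel and the objective (1/n) sum_i (y_i - f(x_i))^2 + lambda ||f||_H^2, whose minimizer is f(x) = sum_i alpha_i k(x_i, x) with alpha = (K + n lambda I)^(-1) y. The function names, the bandwidth sigma and the toy sine data are illustrative assumptions, not course material.

```python
import numpy as np

def gaussian_kernel(X, Z, sigma=1.0):
    """Gaussian kernel matrix K[i, j] = exp(-||x_i - z_j||^2 / (2 sigma^2))."""
    sq_dists = (
        np.sum(X**2, axis=1)[:, None]
        + np.sum(Z**2, axis=1)[None, :]
        - 2.0 * X @ Z.T
    )
    # Clip tiny negative values caused by floating-point cancellation.
    return np.exp(-np.maximum(sq_dists, 0.0) / (2.0 * sigma**2))

def kernel_ridge_fit(K, y, lam):
    """Dual coefficients alpha solving (K + n * lam * I) alpha = y."""
    n = K.shape[0]
    return np.linalg.solve(K + n * lam * np.eye(n), y)

def kernel_ridge_predict(K_test_train, alpha):
    """f(x) = sum_i alpha_i k(x_i, x) for every test point x."""
    return K_test_train @ alpha

# Toy example: a sine curve, which no model linear in x can fit.
rng = np.random.default_rng(0)
X_train = rng.uniform(-3, 3, size=(100, 1))
y_train = np.sin(X_train[:, 0]) + 0.1 * rng.standard_normal(100)
X_test = np.linspace(-3, 3, 50)[:, None]

alpha = kernel_ridge_fit(gaussian_kernel(X_train, X_train), y_train, lam=1e-3)
y_pred = kernel_ridge_predict(gaussian_kernel(X_test, X_train), alpha)
print(np.max(np.abs(y_pred - np.sin(X_test[:, 0]))))
```

The data enter the fit only through the kernel matrix K, so swapping gaussian_kernel for another positive definite kernel (for example one defined on strings or graphs) leaves the fitting code unchanged.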

Slides

Practical sessions

Practical sessions (TP) typically consist of mathematical exercises and hands-on sessions with scikit-learn.

- TP1 - Notes on the basics of linear algebra and matrix calculus.

- TP2 - Exercises on designing an algorithm based on IRLS (iteratively reweighted least squares) to solve ridge logistic regression.

- TP3 - Implementation of a solver for ridge logistic regression.

- TP4 - Practical exercises on linear SVM and model selection.

- TP5 - Practical exercises on nonlinear SVM and Nyström approximation.
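TP2 and TP3 revolve around IRLS for ridge logistic regression. The sketch below assumes labels in {-1, +1}, no intercept (add a constant column to X if one is needed) and the objective (1/n) sum_i log(1 + exp(-y_i w^T x_i)) + (lambda/2) ||w||^2; the normalization used in the TP may differ. Each Newton step is written as a weighted ridge regression, which is what gives IRLS its name. The function names are hypothetical.

```python
import numpy as np

def sigmoid(t):
    # sigma(t) = 1 / (1 + exp(-t)), computed stably via logaddexp.
    return np.exp(-np.logaddexp(0.0, -t))

def ridge_logistic_irls(X, y, lam, n_iter=20, tol=1e-8):
    """Minimize (1/n) sum log(1 + exp(-y_i w^T x_i)) + (lam/2)||w||^2, y_i in {-1, +1}."""
    n, d = X.shape
    w = np.zeros(d)
    for _ in range(n_iter):
        m = y * (X @ w)                   # margins y_i w^T x_i
        W = sigmoid(m) * sigmoid(-m)      # IRLS weights (curvature of the logistic loss)
        # Newton step as a weighted ridge system:
        # (X^T W X / n + lam I) w_new = X^T (W * Xw + y * sigma(-m)) / n
        A = X.T @ (W[:, None] * X) / n + lam * np.eye(d)
        b = X.T @ (W * (X @ w) + y * sigmoid(-m)) / n
        w_new = np.linalg.solve(A, b)
        converged = np.linalg.norm(w_new - w) < tol
        w = w_new
        if converged:
            break
    return w

# Usage on synthetic data: at the optimum the gradient of the objective vanishes.
rng = np.random.default_rng(0)
X = rng.standard_normal((200, 5))
w_true = np.array([1.0, -2.0, 0.5, 0.0, 0.0])
y = np.where(X @ w_true + 0.5 * rng.standard_normal(200) > 0, 1.0, -1.0)
lam = 0.1
w_hat = ridge_logistic_irls(X, y, lam)
m = y * (X @ w_hat)
grad = -(X.T @ (y * sigmoid(-m))) / len(y) + lam * w_hat
print(np.linalg.norm(grad))
```

Solving the weighted system directly avoids dividing by the weights W, which can underflow to zero for samples with very large margins.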

Quiz

Corrections to Quiz #1 and Quiz #2.

Project

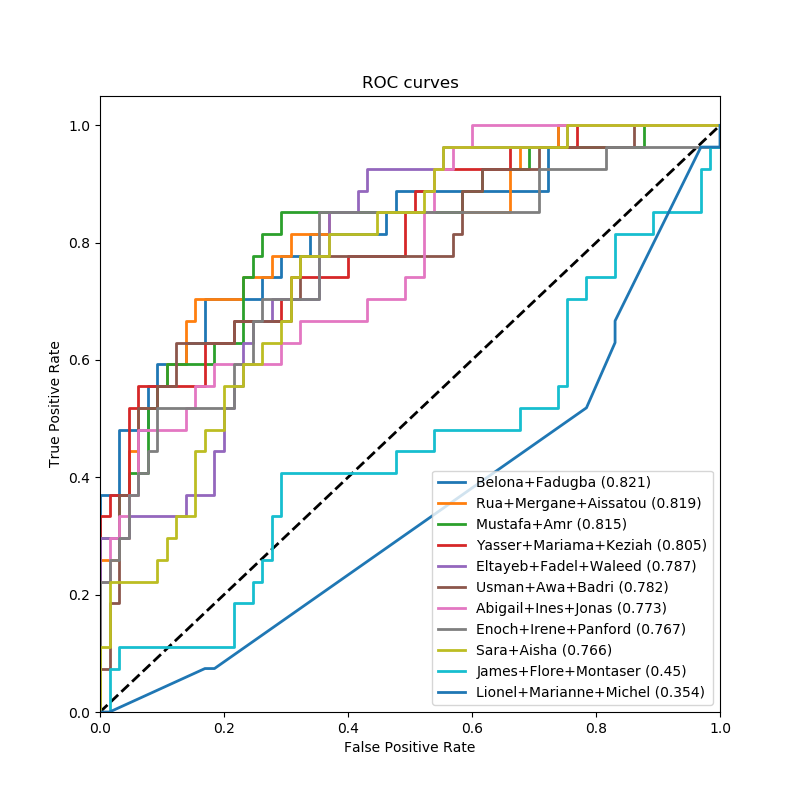

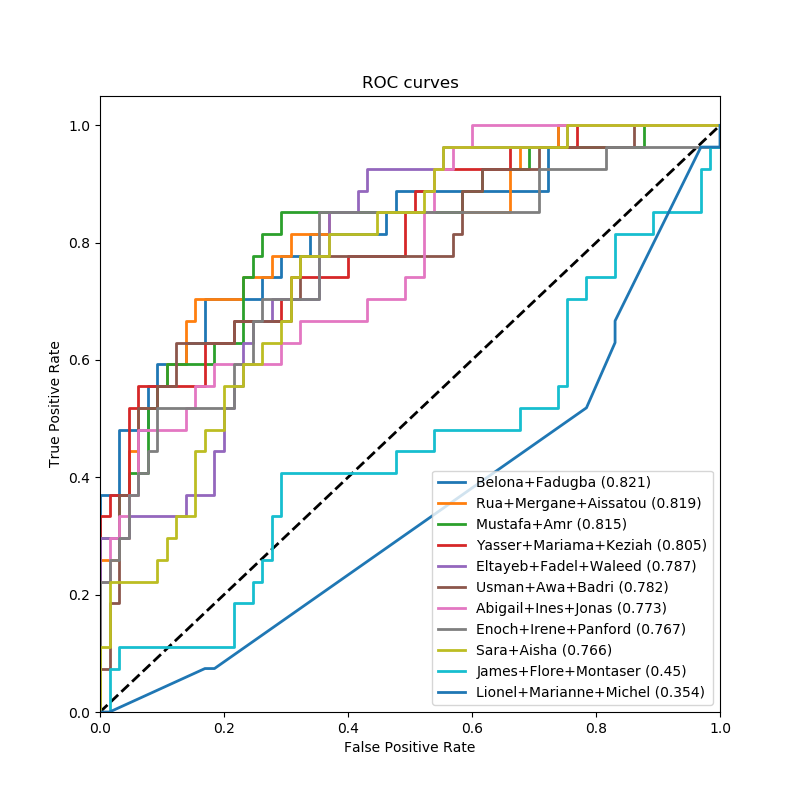

We have collected gene expression levels for 4654 genes on 184 early-stage breast cancer samples: xtrain.txt (each row is a gene, each column a sample). After surgical removal of the tumour, some patients unfortunately relapsed within 5 years (label=+1), while others did not (label=-1). The labels of the 184 samples are available in the file ytrain.txt.

- Propose and test different techniques to predict relapse from gene expression data. Check the effect of the parameters and estimate the performance.

- Make a prediction of relapse for the following 92 samples: xtest.txt.
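One possible starting point, sketched with scikit-learn under stated assumptions: xtrain.txt is assumed to be a whitespace-separated numeric matrix readable by np.loadtxt, transposed so that rows are samples; the model is an RBF-kernel SVM whose C and gamma grid values are arbitrary choices; and performance is estimated by nested cross-validation, so that the parameter search does not inflate the AUC estimate. The helper names are hypothetical, and the demo runs on synthetic placeholder data rather than the project files.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

def load_expression(path):
    # Assumed format: genes as rows, samples as columns; transpose to samples x genes.
    return np.loadtxt(path).T

def make_model():
    # Standardize each gene, then fit an RBF-kernel SVM; C and gamma are tuned by inner CV on AUC.
    pipe = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
    grid = {"svc__C": [0.1, 1.0, 10.0], "svc__gamma": ["scale", 1e-4, 1e-3]}
    inner = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
    return GridSearchCV(pipe, grid, scoring="roc_auc", cv=inner)

def nested_cv_auc(X, y):
    # The outer CV scores the whole procedure, parameter search included.
    outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
    return cross_val_score(make_model(), X, y, scoring="roc_auc", cv=outer)

# With the project files:  X = load_expression("xtrain.txt"); y = np.loadtxt("ytrain.txt")
# Self-contained demo on synthetic placeholder data (fewer features, for speed):
rng = np.random.default_rng(0)
X = rng.standard_normal((184, 50))
y = np.where(X[:, 0] + 0.5 * rng.standard_normal(184) > 0, 1, -1)
scores = nested_cv_auc(X, y)
print(scores.mean(), scores.std())
```

Reporting the mean and spread over the outer folds, rather than GridSearchCV's best_score_, gives a less optimistic view of how the chosen procedure generalizes.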

Please send your prediction file to kernel.ammi2018@gmail.com before Friday, February 1st, 1pm. Your prediction file should be a text file called "teamname.txt", with 92 lines, each line containing a real-valued score which should be large if you think the corresponding sample has label +1 (relapse), small otherwise. The predictions will be scored in terms of area under the ROC curve (AUC).
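A sketch of producing the prediction file, assuming any fitted classifier that exposes decision_function; here a placeholder RBF SVM trained on synthetic data stands in for your own model, and write_predictions is a hypothetical helper. Because AUC depends only on how the scores rank the samples, any real-valued score works as long as larger means more likely relapse, and applying an increasing transform such as exp leaves the AUC unchanged.

```python
import numpy as np
from sklearn.metrics import roc_auc_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

def write_predictions(scores, path):
    # One real-valued score per line; larger means "more likely relapse (+1)".
    scores = np.asarray(scores, dtype=float).ravel()
    np.savetxt(path, scores, fmt="%.6f")

# Synthetic placeholders for xtrain.txt / ytrain.txt / xtest.txt (samples as rows).
rng = np.random.default_rng(0)
X_train = rng.standard_normal((184, 50))
y_train = np.where(X_train[:, 0] > 0, 1, -1)
X_test = rng.standard_normal((92, 50))

model = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0)).fit(X_train, y_train)
# decision_function returns signed scores: larger values lean towards class +1.
scores = model.decision_function(X_test)
write_predictions(scores, "teamname.txt")

# AUC is rank-based: an increasing transform of the scores gives the same value.
y_demo = np.where(X_test[:, 0] > 0, 1, -1)
print(roc_auc_score(y_demo, scores), roc_auc_score(y_demo, np.exp(scores)))
```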

In addition, prepare a 5-minute presentation to explain what you did. The presentations will take place on Friday afternoon, 2-4pm.

You are encouraged to work in teams of at most 3 students.

Results

Evaluation

The final mark will be a weighted average of the two quiz results (30% each), the project presentation (20%), and the project prediction performance (20%).